Code Evolution in the Wild

The Engineering Behind Self-Improving Code

How Do We Create Software?

Three Paradigms

Hand-Coding

Human writes every line.

Slow, predictable, brittle.

👥 5–10 engineers

✗ Doesn't scale to complexity

"Vibe Coding"⚠

LLM generates code.

Fast, unreliable, hallucinates.

👥 1 engineer

✗ No performance guarantees

Code Competes to Survive

Mutation, selection, iteration.

Emergent, adaptive, measurable.

👥 1 evolution engineer

✓ Human-readable output

What Is Code Evolution?

Natural Selection — Applied to Algorithms

🧬 Biological Evolution

💻 Code Evolution

Same algorithm. Different substrate.

DNA → Source code · Predator → Opponent · Generations → Rounds

Evolutionary Paradigms in Software

Biology rejected two of these. Code doesn't have to.

Darwinian

Random mutation + natural selection.

LLM proposes random code changes → fitness test → accept if better.

Lamarckian

Acquired traits are inherited (learning passes to offspring).

LLM reflection loop: "I failed because X" → next mutation avoids X.

Orthogenesis

Evolution has direction (mutations are guided, not random).

Constrained mutation space: "only mutate formation logic, preserve message protocol."

Biology rejected Lamarck and Orthogenesis.

But in code, we can inherit learned behaviors. We can guide mutation.

Evolution is a tool, not a dogma.
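The Darwinian recipe above (propose a change, test fitness, accept if better) can be sketched on a toy problem. This is only an illustration, not the SwarmEvolve implementation: the genome is a single number rather than source code, random noise stands in for the LLM's proposal, and `toy_fitness` and `evolve` are hypothetical names.

```cpp
#include <cassert>
#include <random>
#include <vector>

// Hypothetical fitness: peaks at genome == 3.0 (a stand-in for match results).
double toy_fitness(double genome) {
    double d = genome - 3.0;
    return -d * d;
}

// Darwinian loop: random mutation, fitness test, accept only if better.
// Returns the champion's fitness after every round.
std::vector<double> evolve(double genome, int rounds, unsigned seed) {
    assert(rounds >= 0);
    std::mt19937 rng(seed);
    std::normal_distribution<double> noise(0.0, 0.5);  // stand-in for the LLM
    std::vector<double> history;
    double best = toy_fitness(genome);
    for (int r = 0; r < rounds; ++r) {
        double candidate = genome + noise(rng);  // mutation
        double score = toy_fitness(candidate);   // fitness test
        if (score > best) {                      // selection
            genome = candidate;
            best = score;
        }
        history.push_back(best);
    }
    return history;
}
```

Because only improvements are accepted, the returned history never decreases. A Lamarckian variant would carry notes from failed attempts into the next proposal, and an orthogenetic one would restrict which part of the genome the mutation may touch.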

SwarmEvolve: The Test Arena

Autonomous Drone Swarms — Where Code Fights to Survive

🔵 Team A (final champion, 204 LOC) vs 🔴 Team B (baseline, 66 LOC) · Round 1

⚔️ Rules of the Game

Key tension: Information asymmetry (hidden cooldowns) + coordination (message protocol) = emergent swarm tactics.

The Experiment

How We Set Up the Evolutionary Loop

🔵 Blue Fleet vs 🔴 Red Fleet — Simulated engagement

Fully automated: 95 rounds · ~90 minutes · ~$10 in API credits · Zero human intervention after launch.

Experiment Setup

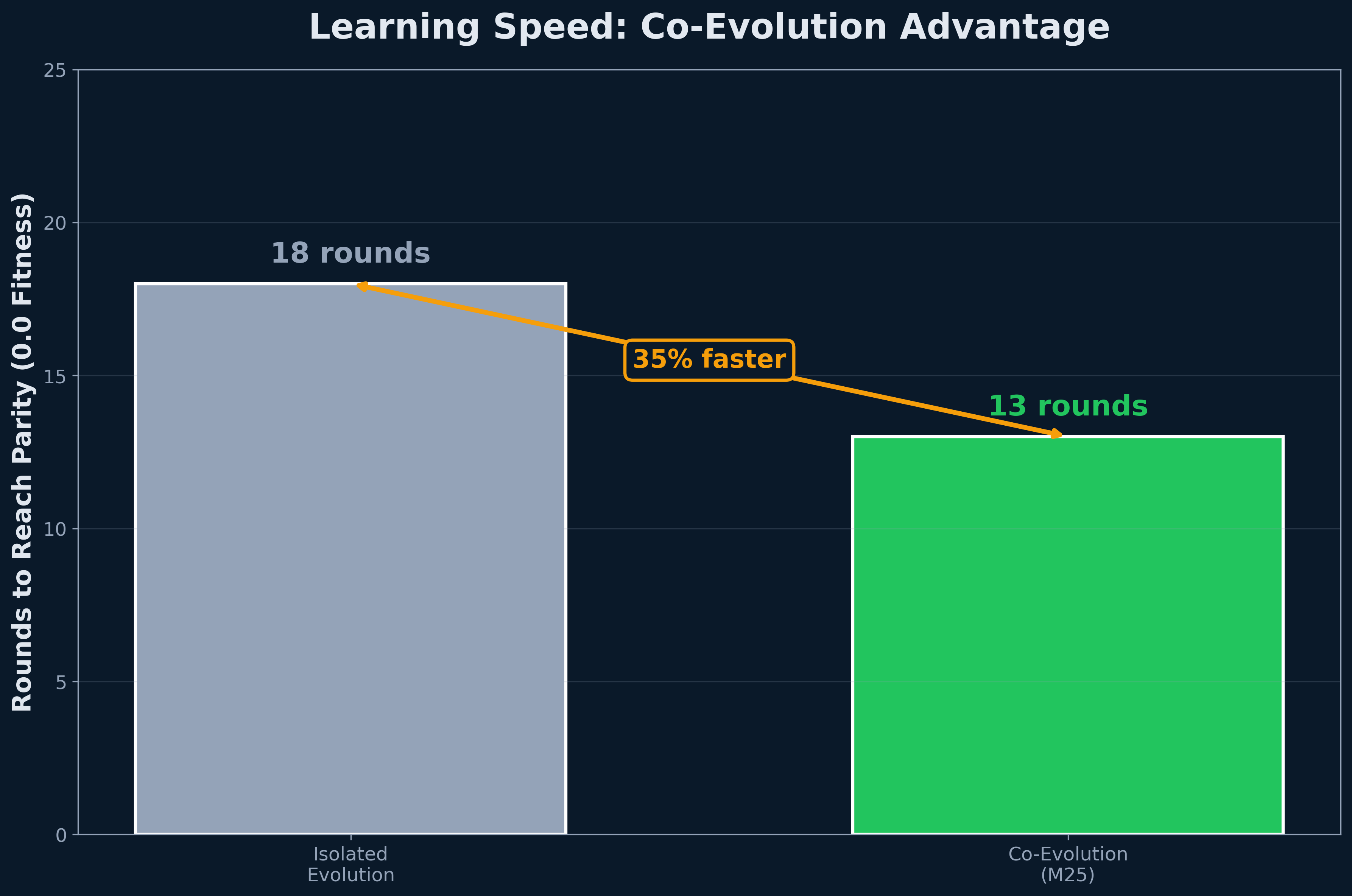

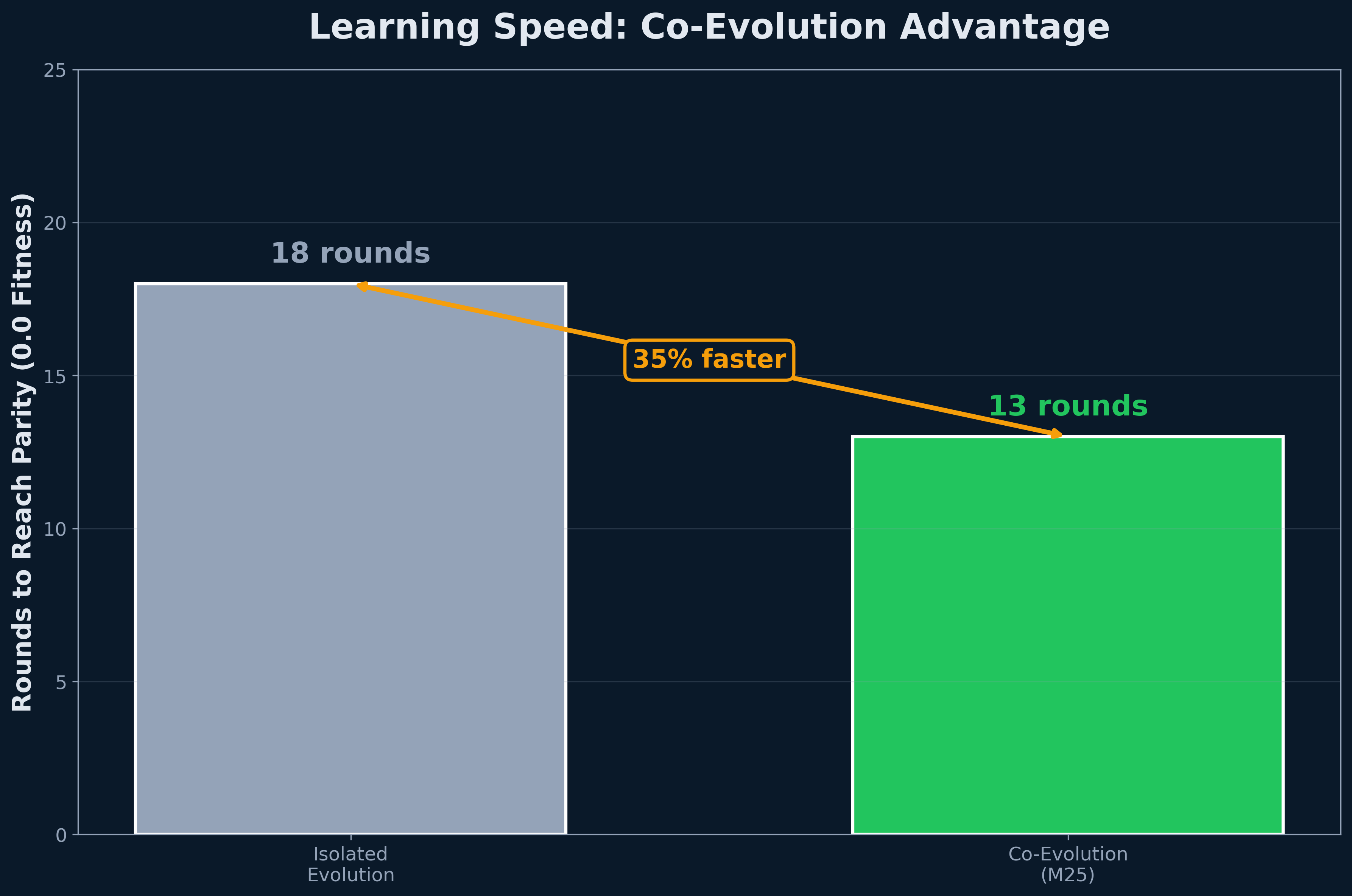

M25: 95-round competitive co-evolution

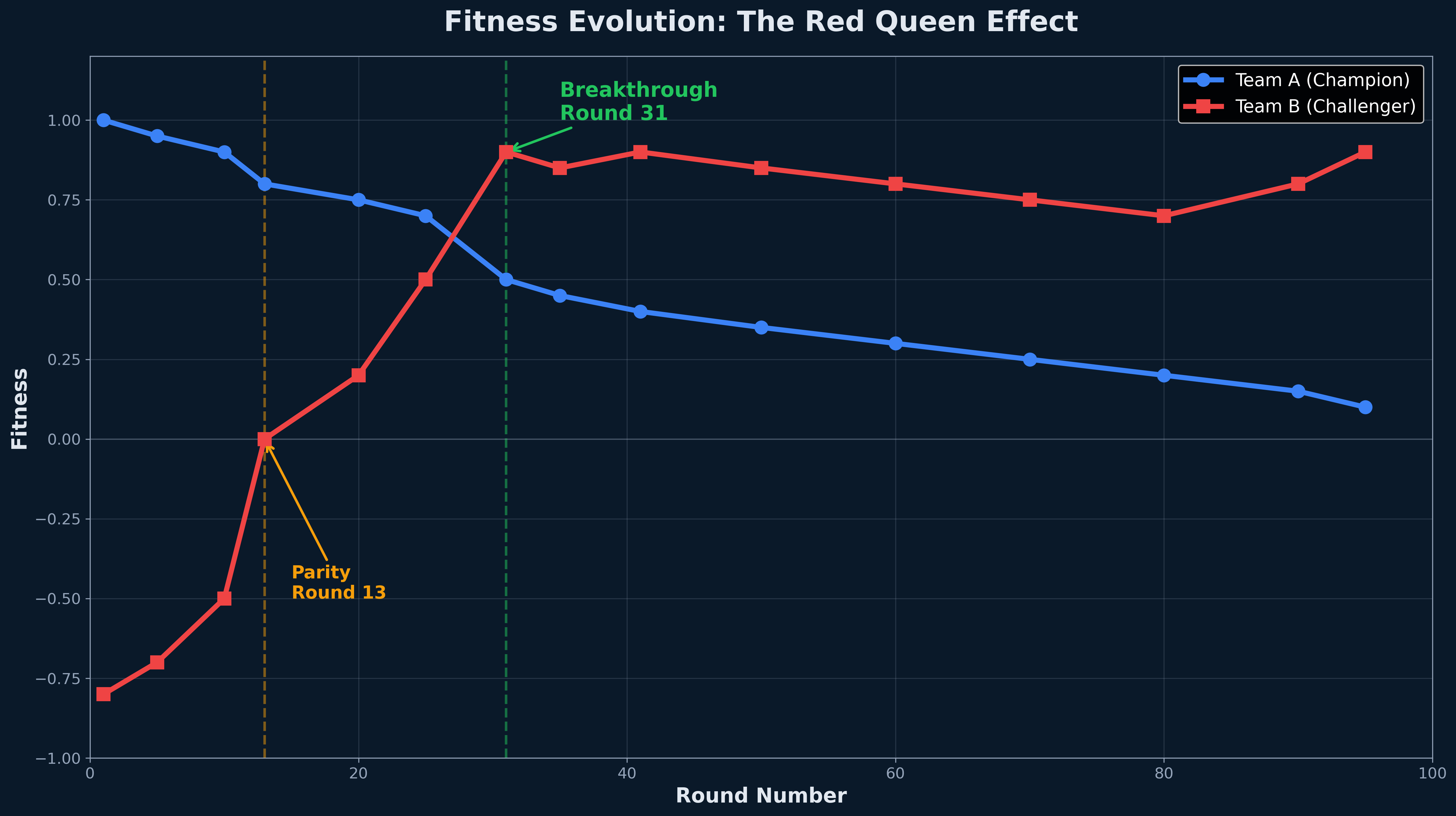

When both teams evolve against each other, weaker teams can discover counter-tactics that surpass initially stronger opponents.

- Models: Sonnet 4 (planner) + Haiku 4.5 (coder)

- Rounds: 95 total · alternating teams (A: even, B: odd)

- Matches: 10 per fitness evaluation

- Acceptance: Relative mode (champion − 0.05)

- Reflection: Strict (enhanced journal validation)

- Budget: ~$10 · ~90 minutes · 1 consumer GPU
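One plausible reading of "Relative mode (champion − 0.05)" is that a candidate is accepted when its fitness is no more than 0.05 below the current champion's. The sketch below encodes that reading; the function name, the parameter names, and the direction of the comparison are assumptions, not taken from the SwarmEvolve code.

```cpp
#include <cassert>

// Assumed reading of "relative mode": a candidate replaces the champion when
// its fitness is at least the champion's fitness minus `margin`.
bool accept_relative(double candidate_fitness, double champion_fitness,
                     double margin = 0.05) {
    assert(margin >= 0.0);
    return candidate_fitness >= champion_fitness - margin;
}
```

Under this reading the margin acts as a tolerance: with only 10 matches per evaluation, a candidate that is really no worse than the champion can score slightly lower by chance, and the margin keeps it from being rejected for that reason alone.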

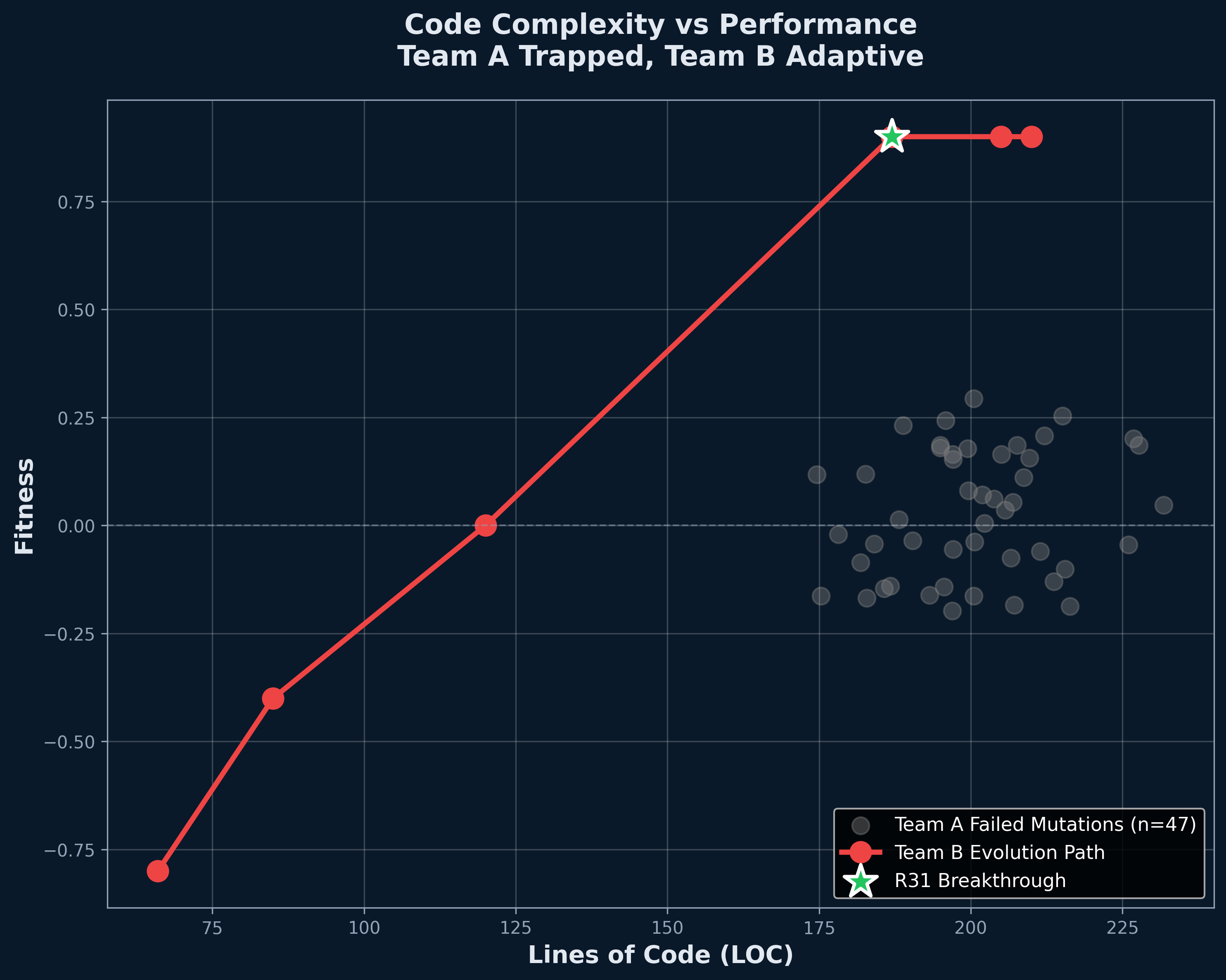

🔵 Team A — The Champion

Tactic: Claim-Arbitrated Targeting + Post-Shot Kite

Stats: 204 LOC · +1.0 fitness · 30/30 wins vs baseline

🔴 Team B — The Underdog

pursuit_v1 baseline

Tactic: Nearest-enemy pursuit · no coordination

Stats: 66 LOC · −0.8 fitness · losing 8 out of 10

Can a 66-line underdog evolve to beat a 204-line champion? Let's find out →

The Result: Underdog Wins

Evolution found what engineering didn't

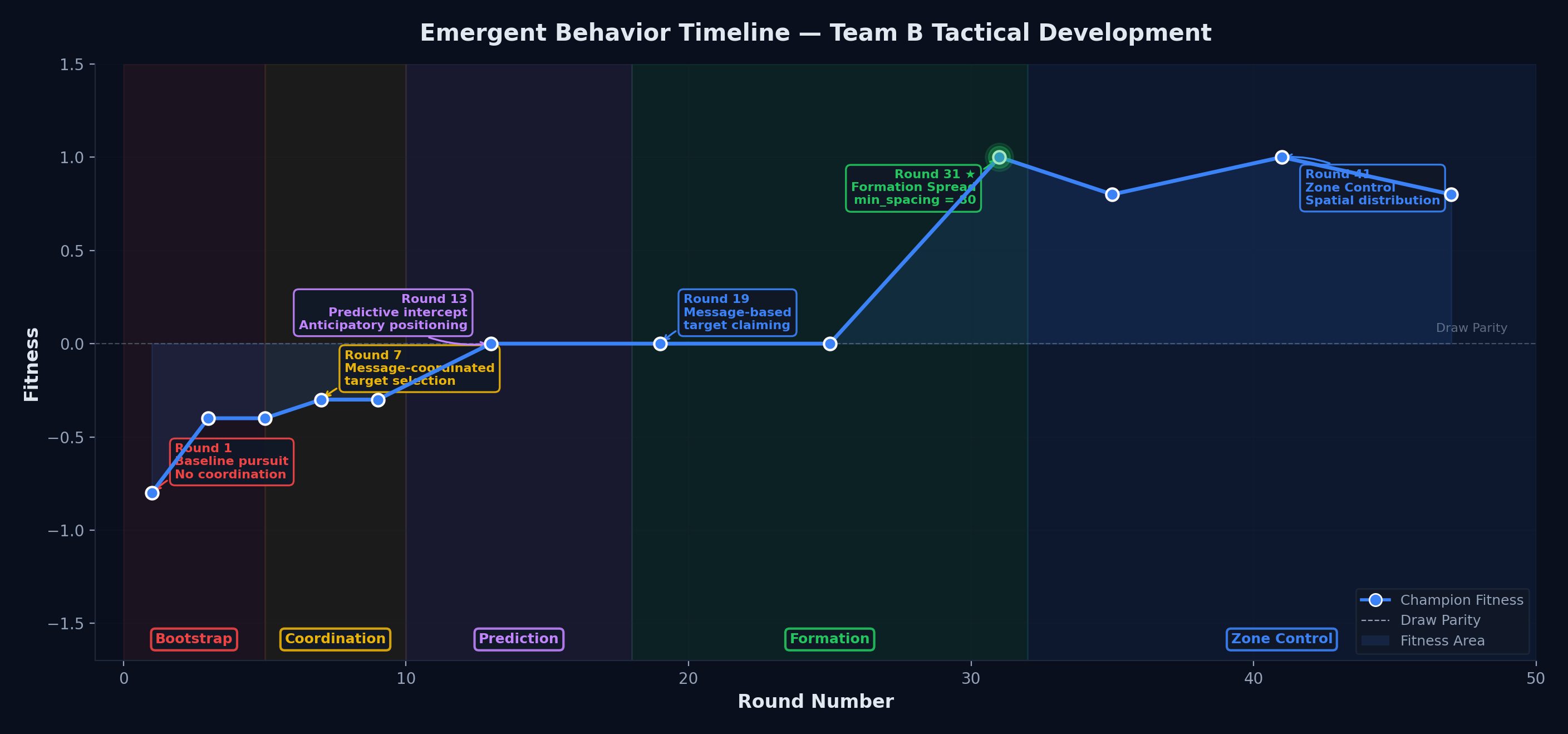

Team B (red) overtakes Team A (blue) at Round 31

🏆 Final Score

Team B went from losing 8 out of 10 battles to dominant winner — in 95 rounds of unguided evolution.

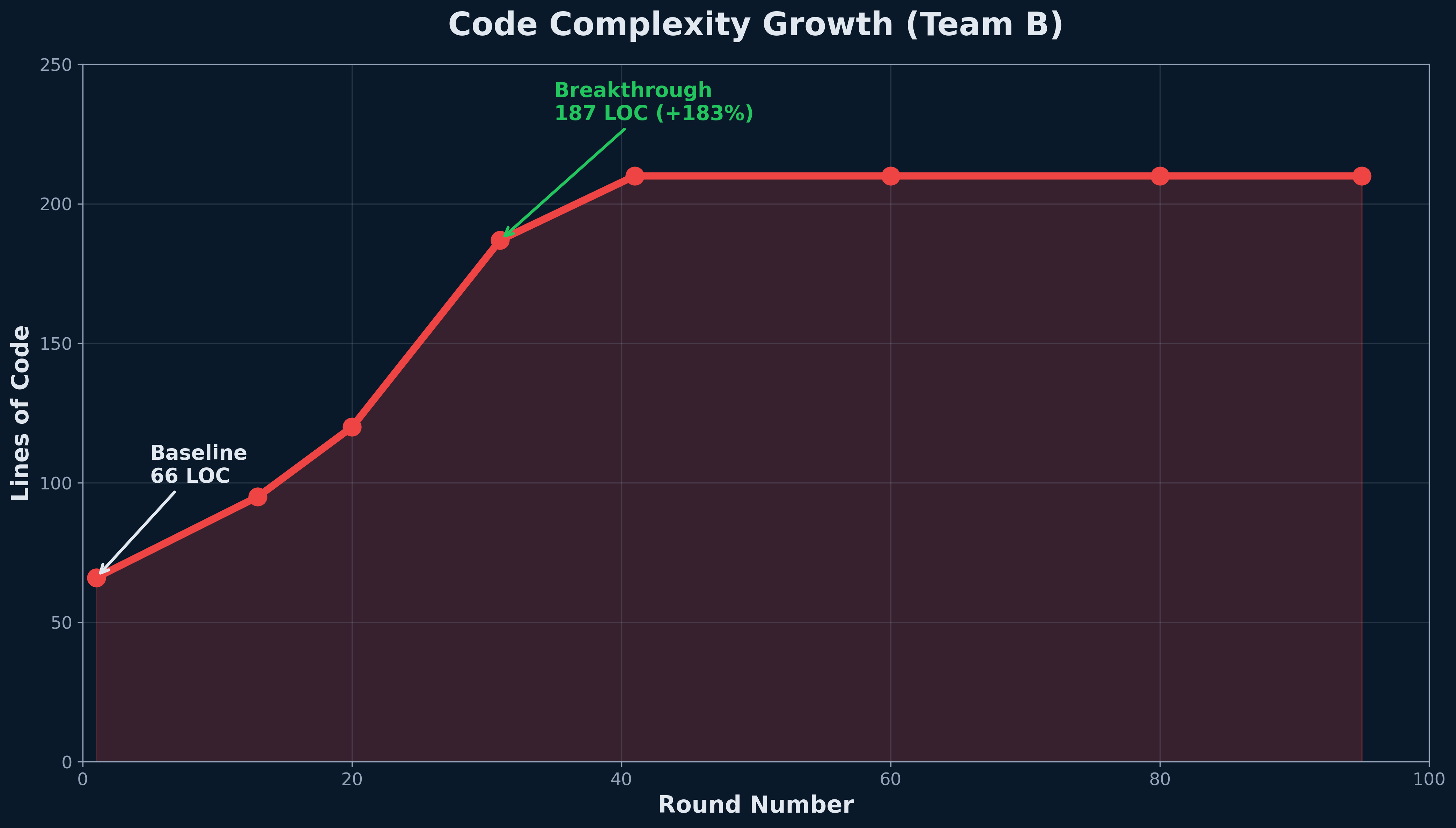

📈 Evolution Trajectory

💡 What Made the Difference

Formation Spread — a single parameter change:

min_spacing = 80

Drones stopped clustering, became harder to hit en masse. No human ever designed this tactic.

The recipe worked. Evolution found a way.

Emergent Behavior

Discovered, not programmed

These tactics have names because we observed them. The LLM didn't plan "zone control." Across both teams: 55 mutation attempts. 8 accepted. This is one of them.

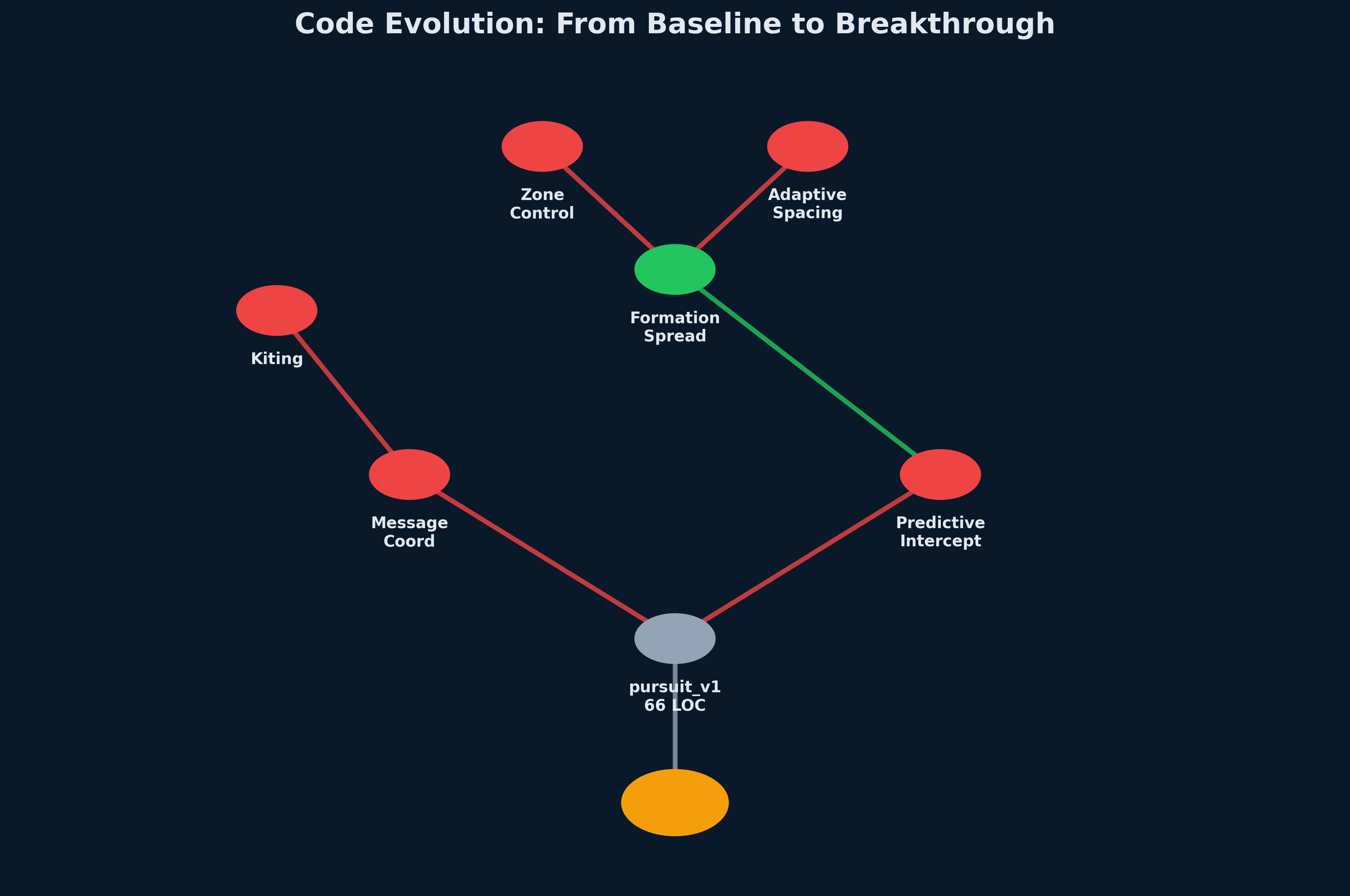

From Pursuit to Zone Control

Each phase added a capability — the breakthrough was their combination

"Learn to talk to each other"

Tactic: Message-Coordinated Targeting

What changed: Each drone broadcasts its intended target_id in message[2].

Why it helped: No more 5 drones piling onto 1 enemy while 4 others escape. Each drone claims a unique target.
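The claiming idea can be sketched as follows, assuming each drone can read the `target_id` values its allies broadcast. The `Enemy` struct, `choose_target`, the `-1` "no claim" convention, and the fallback rule are hypothetical and only illustrate the technique.

```cpp
#include <cassert>
#include <cmath>
#include <limits>
#include <vector>

struct Enemy { float x, y; bool alive; };

// Picks the nearest living enemy that no ally has claimed yet.
// `ally_claims` holds the target_id each ally broadcast (assumed to arrive
// via message[2]); -1 means "no claim". If every living enemy is already
// claimed, falls back to the nearest living one. Returns -1 if none is alive.
int choose_target(float my_x, float my_y,
                  const std::vector<Enemy>& enemies,
                  const std::vector<int>& ally_claims) {
    const int n = static_cast<int>(enemies.size());
    int best_free = -1, best_any = -1;
    float free_dist = std::numeric_limits<float>::infinity();
    float any_dist = std::numeric_limits<float>::infinity();
    for (int i = 0; i < n; ++i) {
        if (!enemies[i].alive) continue;
        float d = std::hypot(enemies[i].x - my_x, enemies[i].y - my_y);
        bool claimed = false;
        for (int c : ally_claims) {
            assert(c >= -1 && c < n);  // claims must be valid ids or -1
            if (c == i) claimed = true;
        }
        if (d < any_dist) { any_dist = d; best_any = i; }
        if (!claimed && d < free_dist) { free_dist = d; best_free = i; }
    }
    return best_free >= 0 ? best_free : best_any;
}
```

A drone would then write the returned id into its own outgoing message so that allies skip that enemy on their next decision.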

"Aim where they'll be"

Tactic: Predictive Intercept Swarm

What changed: Each drone analyzes enemy positions and predicts their retreat vectors, then leads the shot.

Why it helped: Shooting at "now" misses a moving target. Leading the target hits it.
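Leading a shot can be written as a small intercept calculation. The sketch below assumes the target keeps a constant velocity and the shot travels in a straight line at a fixed speed; the evolved code may predict retreat vectors differently, and `Vec2` and `lead_target` are hypothetical names.

```cpp
#include <cassert>
#include <cmath>
#include <optional>

struct Vec2 { float x, y; };

// Returns the point to aim at so that a shot of speed `shot_speed` meets a
// target located at `rel_pos` (relative to the shooter) and moving with
// constant velocity `vel`. Solves |rel_pos + vel * t| = shot_speed * t for
// the smallest t > 0; returns nullopt when no intercept exists.
std::optional<Vec2> lead_target(Vec2 rel_pos, Vec2 vel, float shot_speed) {
    assert(shot_speed > 0.0f);
    float a = vel.x * vel.x + vel.y * vel.y - shot_speed * shot_speed;
    float b = 2.0f * (rel_pos.x * vel.x + rel_pos.y * vel.y);
    float c = rel_pos.x * rel_pos.x + rel_pos.y * rel_pos.y;
    float t = -1.0f;
    if (std::fabs(a) < 1e-6f) {  // target as fast as the shot: linear case
        if (b < -1e-6f) t = -c / b;
    } else {
        float disc = b * b - 4.0f * a * c;
        if (disc >= 0.0f) {
            float root = std::sqrt(disc);
            float t1 = (-b - root) / (2.0f * a);
            float t2 = (-b + root) / (2.0f * a);
            if (t1 > 0.0f && (t2 <= 0.0f || t1 < t2)) t = t1;
            else if (t2 > 0.0f) t = t2;
        }
    }
    if (t <= 0.0f) return std::nullopt;
    return Vec2{rel_pos.x + vel.x * t, rel_pos.y + vel.y * t};
}
```

For a target 100 units away moving sideways at 10 units per tick and a shot speed of 20, the aim point lands about 57.7 units ahead of the target's current position. A target fleeing faster than the shot yields no solution.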

"Don't bunch up — own the space"

Tactic: Formation Spread → Zone Control with Baiting

What changed: Drones maintain 80-unit minimum spacing; formation covers 60% of the arena.

Why it helped: No friendly fire. Multiple firing angles. No escape lanes.

Communication alone couldn't win. Prediction couldn't dominate.

Evolution stacked them in order — and the combination was the breakthrough.

Code Archaeology

What Changed Between Round 1 and Round 31?

    // Simple pursuit
    for (int i = 0; i < num_enemies; i++) {
        if (!enemies[i].alive) continue;
        float dx = enemies[i].x - my_x;
        float dy = enemies[i].y - my_y;
        float dist = sqrtf(dx*dx + dy*dy);
        if (dist < closest_dist) {
            closest_dist = dist;
            target_id = i;
            move_x = dx;
            move_y = dy;
        }
    }
    // Normalize and move
    float mag = sqrtf(move_x*move_x + move_y*move_y);
    if (mag > 0.01f) {
        move_x /= mag;
        move_y /= mag;
    }

    // Formation Spread with repulsion
    float repulse_x = 0.0f;
    float repulse_y = 0.0f;
    for (int i = 0; i < num_allies; i++) {
        if (i == my_id || !allies[i].alive) continue;
        float dx = my_x - allies[i].x;
        float dy = my_y - allies[i].y;
        float dist = sqrtf(dx*dx + dy*dy);
        const float min_spacing = 80.0f; // ← THE KEY LINE
        if (dist < min_spacing && dist > 0.01f) {
            float push_x = dx / dist;
            float push_y = dy / dist;
            float strength = (min_spacing - dist) / min_spacing;
            repulse_x += push_x * strength;
            repulse_y += push_y * strength;
        }
    }
    // Combine pursuit + repulsion
    move_x = 0.6f * pursuit_x + 0.4f * repulse_x;
    move_y = 0.6f * pursuit_y + 0.4f * repulse_y;

One constant. 80 units. Emergent zone coverage.

The swarm didn't know it was inventing a strategy. It just... worked.
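To see the numbers, the repulsion excerpt can be wrapped into a self-contained function. The logic and the 0.6/0.4 blend follow the excerpt; the `Ally` and `Vec2` structs and the function names are assumptions made so the snippet compiles on its own.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

struct Ally { float x, y; bool alive; };
struct Vec2 { float x, y; };

// Sums a push away from every living ally closer than min_spacing.
// The push grows linearly from 0 (at min_spacing) toward 1 (near zero distance).
Vec2 repulsion(int my_id, const std::vector<Ally>& allies,
               float min_spacing = 80.0f) {
    assert(my_id >= 0 && my_id < static_cast<int>(allies.size()));
    Vec2 out{0.0f, 0.0f};
    for (int i = 0; i < static_cast<int>(allies.size()); ++i) {
        if (i == my_id || !allies[i].alive) continue;
        float dx = allies[my_id].x - allies[i].x;
        float dy = allies[my_id].y - allies[i].y;
        float dist = std::sqrt(dx * dx + dy * dy);
        if (dist < min_spacing && dist > 0.01f) {
            float strength = (min_spacing - dist) / min_spacing;
            out.x += dx / dist * strength;
            out.y += dy / dist * strength;
        }
    }
    return out;
}

// Blend used in the excerpt: 60% pursuit, 40% repulsion.
Vec2 blend(Vec2 pursuit, Vec2 repulse) {
    return {0.6f * pursuit.x + 0.4f * repulse.x,
            0.6f * pursuit.y + 0.4f * repulse.y};
}
```

With an ally 40 units away, the strength is (80 − 40) / 80 = 0.5, so a drone pursuing straight toward that ally moves at 0.6 − 0.4 × 0.5 = 0.4 of full speed in that direction. An ally 200 units away contributes nothing.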

Evolution Engineering

A new discipline — designing the system that designs the code

Evolution doesn't just happen. Someone has to design the rules of the game: what counts as success, when a mutation gets accepted, how to keep the system from grinding to a halt. That person is an Evolution Engineer.

Genome Scope

"One shared codebase, or one per individual?"

Loop Topology

"Who evolves when — and how often?"

Fitness Function

"What exactly are we rewarding?"

Stagnation Defense

"How do we keep evolution from stalling?"

Mutation Bounds

"What's the LLM allowed to change?"

Termination

"When are we done?"

Six dials. Turn them differently — get a different evolution.

Decision 1: The Genome Question

One shared codebase, or one per individual?

Shared Genome

One C++ file represents the team. Every drone runs the same code. The whole "species" mutates as one unit.

Pros

- Fast: one mutation, one compile, one test

- Simple comparison: A's code vs B's code

- Cheap to iterate ($10 for 95 rounds)

Cons

- Tiny "population", so high variance

- No genetic diversity to recombine

Independent Genomes

Each individual has its own code. A whole population evolves with diversity, sub-species, even crossover.

Pros

- Genetic diversity → multiple strategies

- Robust to bad luck (one weak variant)

Cons

- Slow: many compilations per round

- Hard to credit-assign across variants

- Used by AlphaStar, NEAT, at $1M scale

We picked shared. Speed of iteration mattered more than diversity for one experiment.

Decision 2: The Evolutionary Loops

Two clocks tick at different speeds

Inner Loop — every round

The mechanic of mutation. Runs hundreds of times per experiment.

Outer Loop — across rounds

The shape of competition. Decides who is the opponent — and when.

Inner loop asks: "Did this mutation help?"

Outer loop asks: "Who is the opponent now?"
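The two loops can be sketched as a scheduler plus a callback. The even/odd alternation comes from the experiment setup; numbering rounds from 0, the `RoundLog` struct, and the `try_mutation` callback are assumptions for illustration.

```cpp
#include <cassert>
#include <functional>
#include <vector>

enum class Team { A, B };

// Outer loop rule from the setup: A mutates on even rounds, B on odd rounds.
Team team_for_round(int round) {
    assert(round >= 0);
    return round % 2 == 0 ? Team::A : Team::B;
}

struct RoundLog { int round; Team mutated; bool accepted; };

// `try_mutation(team)` is a hypothetical callback for the inner loop: it
// proposes a mutation for `team`, plays the fitness matches against the other
// team's current champion, and returns true if the mutation was accepted.
std::vector<RoundLog> run_coevolution(
        int rounds, const std::function<bool(Team)>& try_mutation) {
    std::vector<RoundLog> log;
    for (int r = 0; r < rounds; ++r) {
        Team t = team_for_round(r);   // outer loop: who evolves now?
        bool ok = try_mutation(t);    // inner loop: did this mutation help?
        log.push_back({r, t, ok});
    }
    return log;
}
```

With 95 rounds numbered from 0, Team A gets 48 mutation turns and Team B gets 47.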

Decision 3: The Fitness Function

What you reward IS what you get

Raw Outcome

Simple. Honest.

⚠ Plateaus when both sides get good — no signal to climb past 50/50.

Shaped Reward

Richer signal early on.

⚠ Game-able — agent farms easy points instead of winning.

Relative Fitness

Adaptive — measures you against the current opponent, not a static yardstick.

✓ Prevents overfitting. Pairs naturally with co-evolution.
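The difference between a raw and a shaped score is easy to show with numbers. The sketch assumes fitness is (wins − losses) / matches, which gives +1.0 for 30/30 wins; the `damage_dealt` signal and the 0.5 bonus weight are invented for illustration.

```cpp
#include <cassert>
#include <cmath>

struct MatchStats {
    int wins, losses, draws;  // outcomes over one evaluation batch
    double damage_dealt;      // hypothetical shaping signal in [0, 1]
};

// Raw outcome: +1.0 for all wins, -1.0 for all losses, draws count as 0.
double raw_fitness(const MatchStats& s) {
    int n = s.wins + s.losses + s.draws;
    assert(n > 0);
    return static_cast<double>(s.wins - s.losses) / n;
}

// Shaped reward: raw outcome plus a bonus for damage dealt.
double shaped_fitness(const MatchStats& s, double bonus_weight = 0.5) {
    return raw_fitness(s) + bonus_weight * s.damage_dealt;
}
```

A team with 6 wins, 4 losses, and little damage scores 0.2 raw, while a team with 5 wins, 5 losses, and heavy damage scores 0.0 raw. Under the shaped reward the second team ranks higher (0.45 vs 0.25), which is the kind of point farming the warning above describes. Relative fitness would use the same raw formula but play the matches against the opponent's current champion instead of a fixed baseline.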

Decision 4: Preventing Stasis

How do you keep evolution from stopping?

Once a system finds a "good enough" answer, mutations stop helping. Without intervention, fitness flatlines.

Nature has five tricks. Engineers borrow them.

We bet on Red Queen pressure — alternating opponents, forcing each to keep adapting.

If the experiment had stalled, we had four backup mechanisms ready.
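Before any backup mechanism can fire, the system has to notice that fitness has flatlined. A minimal detector might look like the sketch below; the window of 10 rounds and the 0.01 threshold are illustrative assumptions, not values from the experiment.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Returns true when the best fitness has improved by no more than `epsilon`
// over the last `window` rounds. Both thresholds are illustrative.
bool is_stalled(const std::vector<double>& best_fitness_per_round,
                std::size_t window = 10, double epsilon = 0.01) {
    assert(window > 0);
    const std::size_t n = best_fitness_per_round.size();
    if (n <= window) return false;  // not enough history yet
    double then = best_fitness_per_round[n - 1 - window];
    double now = best_fitness_per_round[n - 1];
    return now - then <= epsilon;
}
```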

Decisions 5 & 6: The Guardrails

What's allowed to change? When do we stop watching?

⚙️ Mutation Bounds

Maximum exploration. Risks compile failures, runtime crashes.

Focused, safe. May miss novel solutions outside the sandbox.

Compile-time instrumentation inserts memory bounds and infinite-loop detectors.

LLM rewrites freely; we inject loop-guards & memory bounds at compile. No infinite loops. No leaks. No black-box crashes.
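A loop guard of the kind described could be as simple as an iteration counter that throws once a budget is exceeded. The `LoopGuard` class and the injected `tick()` call below are a hypothetical sketch of the idea, not the instrumentation SwarmEvolve uses.

```cpp
#include <cassert>
#include <cstddef>
#include <stdexcept>

// Hypothetical guard that an instrumentation pass could insert into loops.
class LoopGuard {
public:
    explicit LoopGuard(std::size_t budget) : budget_(budget) {
        assert(budget > 0);
    }
    // Call once per iteration; throws when the loop exceeds its budget.
    void tick() {
        if (++count_ > budget_) throw std::runtime_error("loop budget exceeded");
    }
private:
    std::size_t budget_;
    std::size_t count_ = 0;
};

// What a generated loop might look like after injection.
int count_until(int limit, std::size_t budget) {
    LoopGuard guard(budget);
    int i = 0;
    while (i != limit) {  // would never reach a negative limit on its own
        guard.tick();     // injected line
        ++i;
    }
    return i;
}
```

A candidate that loops forever then fails fast with an exception the harness can catch and score as a rejected mutation, instead of hanging the whole run.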

🛑 Termination Criteria

Predictable cost. Easy to compare experiments.

Saves money on dead runs; risks premature stop.

Clear success criterion; assumes you know the goal.

Let it run. Cheap if you have spare compute.

Predictable, re-runnable for ablation studies, fits a lunch break.

Bounds = "what" can change. Termination = "when" we stop watching.
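The termination options described above (a fixed budget, stopping early on a dead run, stopping at a target) can be combined in one policy check. The struct and field names are hypothetical, and disabling the target with a value above 1.0 assumes fitness stays within [−1, 1].

```cpp
#include <cassert>

struct RunState {
    int round;                     // rounds completed so far
    double best_fitness;           // best fitness seen so far
    int rounds_since_improvement;  // rounds since best_fitness last rose
};

struct StopPolicy {
    int max_rounds;         // fixed budget; <= 0 disables
    double target_fitness;  // stop once reached; a value > 1.0 disables
    int patience;           // stop after this many flat rounds; <= 0 disables
};

bool should_stop(const RunState& s, const StopPolicy& p) {
    assert(s.round >= 0);
    if (p.max_rounds > 0 && s.round >= p.max_rounds) return true;
    if (s.best_fitness >= p.target_fitness) return true;
    if (p.patience > 0 && s.rounds_since_improvement >= p.patience) return true;
    return false;
}
```

A fixed 95-round run corresponds to `StopPolicy{95, 2.0, 0}`: only the round budget is active, so two runs with different settings always cost the same and stay comparable.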

The Future of Evolutionary Code

If we're right, this is just the beginning

🌐 Co-Evolving Microservices

Frontend and backend evolve together, optimizing for latency, throughput, cost. APIs compete for efficiency.

🛡️ Immune-System Software

Code that adapts to attacks in real time. Firewall rules evolve against adversarial traffic patterns.

🔧 Evolutionary Debugging

Mutate code until tests pass. Fitness = % tests green. Let evolution fix bugs while you sleep.

🤝 Symbiotic Codebases

Modules co-evolve for mutual benefit. Database queries optimize alongside indexing strategies.

What if all software was alive?

Credits & Reproduction

Standing on the Shoulders of Giants

Intellectual Foundations

- Charles Darwin — Natural selection, Origin of Species

- Gregor Mendel — Genetics, inheritance mechanisms

- Stephen Jay Gould — Punctuated equilibrium (1972)

- Leigh Van Valen — Red Queen hypothesis (1973)

- Sewall Wright — Fitness landscapes (1932)

Tools & Technologies

- Claude Sonnet 4 — Mutation planner

- Claude Haiku 4.5 — Code writer

- OpenACC — GPU parallelization

- C++17 — Implementation language

Reproduce This Experiment

    git clone https://github.com/leybzon/SwarmEvolve
    cd SwarmEvolve
    python3 scripts/evolve_coevolve.py \
      --init-champion-a data/runs/m22_rq1_100gen/gen_0033/candidate.cpp \
      --init-champion-b src/baselines/pursuit_v1.cpp \
      --planner-model claude-sonnet-4-20250514 \
      --coder-model claude-haiku-4-5 \
      --rounds 100 --n-matches 10 --seed 42 \
      --acceptance-mode relative --strict-reflection

- GitHub: github.com/leybzon/SwarmEvolve

- License: MIT (open source)

Questions? Open an issue on GitHub or contact Gene Leybzon.